From Prompts -> Context -> Harness Engineering: The Evolution of Building AI Agents

Agent = Model + Harness

The way we build with AI has changed three times in three years. Most people are still catching up to the second shift. The third one is already here.

The First Shift: Prompt Engineering

When ChatGPT arrived, everyone became a prompt engineer.

The game was simple: ask better questions, get better answers. Give the model a role. Break your task into steps. Add examples. Chain your thoughts. The better your prompt, the better your output.

This worked well for one-shot tasks. Ask, receive, done.

But something changed when we started building products with AI. Not just one-off queries, but systems that needed to reason across steps, remember things, and take real actions in real systems. Prompts alone were not enough.

The Second Shift: Context Engineering

In mid-2025, Andrej Karpathy made it explicit: context engineering matters more than prompt engineering.

The insight was simple but important. A model can only reason over what it can see. The real question is not just “What do you ask?” but “What does the model see at reasoning time?”

Context engineering is everything that shapes the model’s input window: the system prompt, conversation history, retrieved documents, tool definitions, memory injections, and more.

If prompt engineering is the command “turn right,” context engineering gives the model the map, the road signs, and the terrain so it actually understands what turning right means in this situation.
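To make the pieces of the input window concrete, here is a minimal Python sketch that assembles them into one model input. The `ContextBundle` class, its field names, and the section labels are hypothetical, chosen only for illustration; real agent frameworks structure this differently.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Hypothetical container for everything the model will see."""
    system_prompt: str
    history: list[str] = field(default_factory=list)
    retrieved_docs: list[str] = field(default_factory=list)
    tool_definitions: list[str] = field(default_factory=list)
    memory_notes: list[str] = field(default_factory=list)

    def render(self, user_message: str) -> str:
        # Each non-empty section becomes a labeled block in the final input.
        sections = [
            ("SYSTEM", [self.system_prompt]),
            ("MEMORY", self.memory_notes),
            ("TOOLS", self.tool_definitions),
            ("DOCUMENTS", self.retrieved_docs),
            ("HISTORY", self.history),
            ("USER", [user_message]),
        ]
        parts = []
        for label, items in sections:
            if items:
                parts.append(f"## {label}\n" + "\n".join(items))
        return "\n\n".join(parts)


bundle = ContextBundle(
    system_prompt="You are a support agent.",
    retrieved_docs=["Refund policy: refunds are allowed within 30 days."],
    tool_definitions=["lookup_order(order_id: str) -> dict"],
)
prompt = bundle.render("Can I return my order?")
print(prompt)
```

The user's question is only the last block. Everything above it is context the builder chose to include, and that selection is what context engineering is about.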

At Salesforce, this is where a lot of Agentforce’s foundational design lives: grounding agents with the right CRM data, customer context, and business rules at reasoning time. Getting context right is what separates an agent that sounds helpful from one that actually is helpful in your specific business context.

But once agents started running autonomously in production, taking real actions across many steps in real enterprise systems, a new set of problems emerged. Problems that better context could not fix.

The Third Shift: Harness Engineering

Here is the thing: even with perfect prompts and perfect context, an autonomous agent will still go off the rails.

It might violate your company’s data access policies. Escalate a case it was supposed to resolve. Trigger an action in Salesforce that cannot be rolled back. Or confidently complete the wrong task entirely.

These are not context problems. They are environment problems.

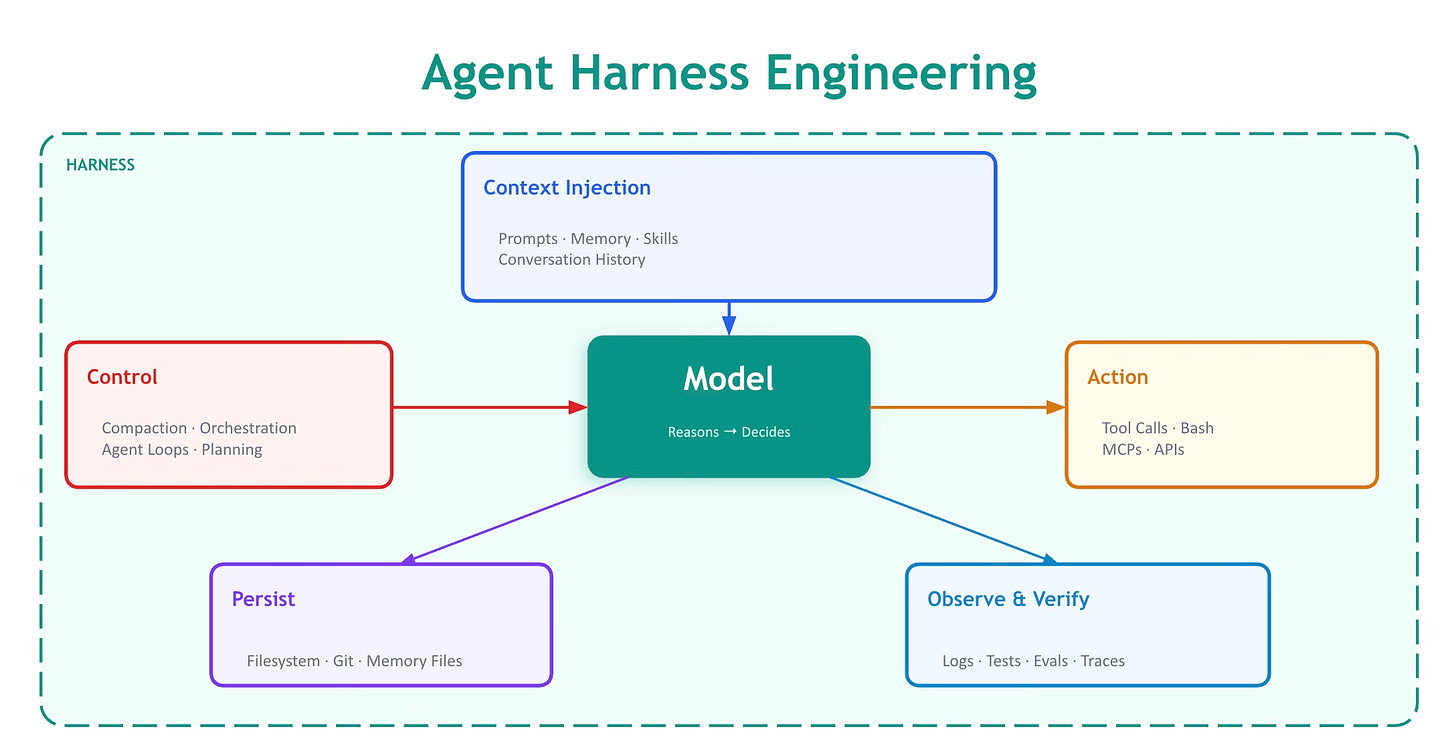

Harness engineering is the discipline of designing the environment around an agent: the constraints, feedback loops, scaffolding, and operational systems that keep it on track.

In the enterprise world, where agents touch customer records, financial data, and compliance workflows, the harness has to do all of that plus ensure trust, safety, and auditability. The stakes are higher and the harness has to be more deliberate.

Agent = Model + Harness

Here is the cleanest mental model:

If you are not the model, you are the harness.

Everything around the model is the harness: the code, configuration, tools, memory, execution logic, constraints, and feedback loops. A raw model is not an agent. A model with a well-designed harness is a work engine.
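One way to picture the split is a toy agent loop in Python. This is a sketch under stated assumptions, not how any particular framework works: `fake_model` stands in for a real model call, and the `CALL:` / `FINAL:` / `RESULT:` text protocol is invented for the example.

```python
from typing import Callable

# The model is just a function from text to text. Everything else is harness.
Model = Callable[[str], str]


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call (an assumption for this sketch)."""
    if "RESULT:" in prompt:
        return "FINAL: the order has shipped"
    return "CALL: lookup_order"


def run_agent(model: Model, task: str, tools: dict[str, Callable[[], str]],
              max_steps: int = 5) -> str:
    """Harness: loop control, tool dispatch, step budget, and stop condition."""
    transcript = task
    for _ in range(max_steps):              # constraint: bounded number of steps
        reply = model(transcript)
        if reply.startswith("FINAL:"):
            return reply.removeprefix("FINAL:").strip()
        tool_name = reply.removeprefix("CALL:").strip()
        if tool_name not in tools:          # constraint: only known tools may run
            transcript += f"\nERROR: unknown tool {tool_name}"
            continue
        transcript += f"\nRESULT: {tools[tool_name]()}"   # feedback to the model
    return "stopped: step budget exhausted"


answer = run_agent(fake_model, "Where is my order?",
                   {"lookup_order": lambda: "status=shipped"})
print(answer)
```

Swapping `fake_model` for a real model changes one argument. The loop, the tool table, and the step budget stay the same, which is the sense in which the harness is everything that is not the model.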

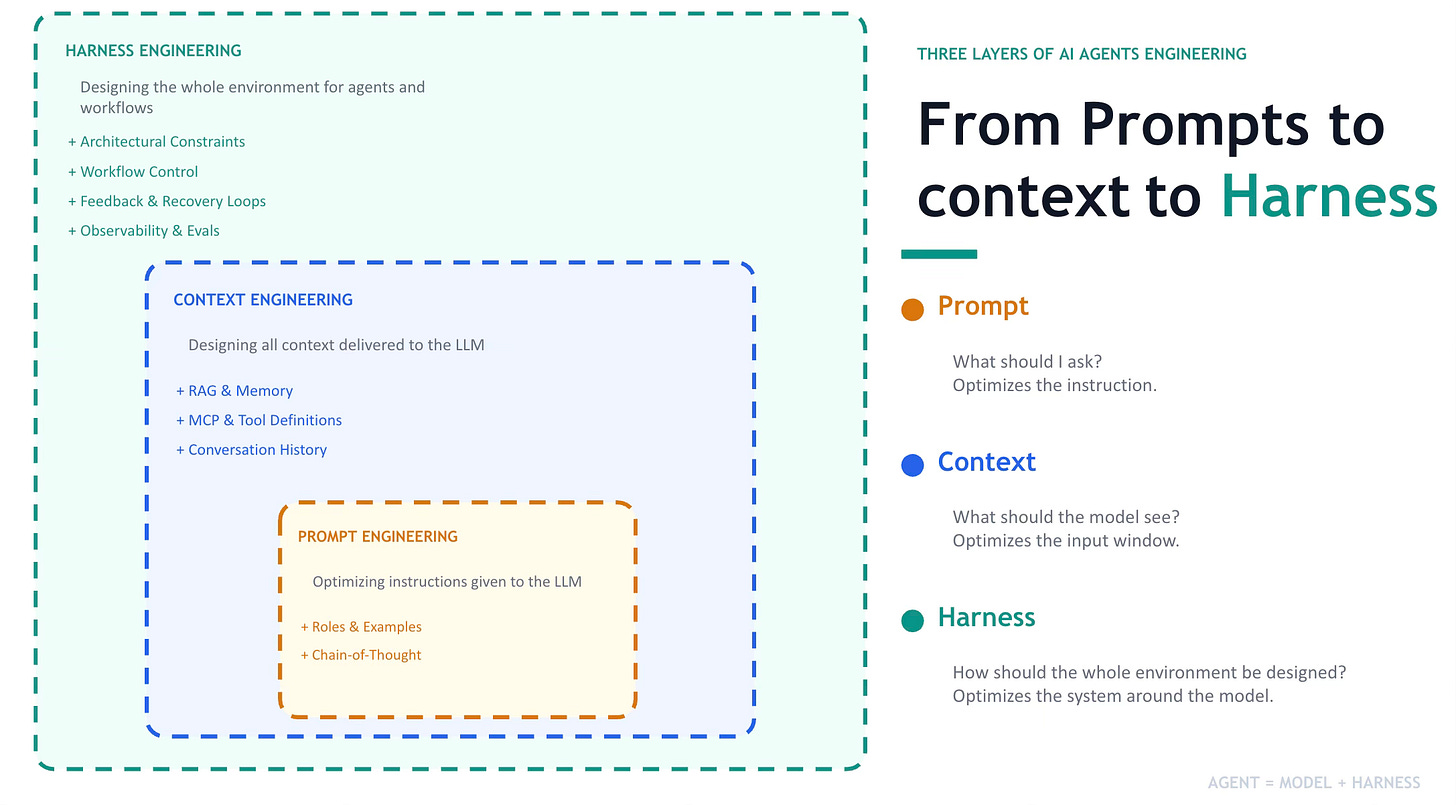

The three layers nest cleanly:

Prompt engineering asks: What should I ask? It optimizes the instruction.

Context engineering asks: What should the model see? It optimizes the input window.

Harness engineering asks: How should the whole environment be designed? It optimizes the system around the model.

Each layer solves a different class of problem. As agents take on more autonomous, long-horizon work, more of what decides success or failure sits in the harness layer rather than in the prompt or the context.

What Goes Into a Harness?

Working backwards from what agents need to do real work in production:

Durable State. Agents need to persist work across sessions and hand off cleanly between turns. In a CRM context, this means maintaining case state, conversation history, and task progress that outlasts a single interaction window.
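A minimal sketch of durable state in Python, assuming a local JSON file as the store. The file name, the state fields, and the case identifier are made up for the example; a production harness would more likely use a database or a platform-provided store.

```python
import json
import os
import tempfile
from pathlib import Path


def save_state(path: Path, state: dict) -> None:
    """Write to a temp file, then rename, so readers never see a partial file."""
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(state, tmp)
    os.replace(tmp_name, path)


def load_state(path: Path) -> dict:
    """Return the saved state, or a fresh one if nothing has been saved yet."""
    if not path.exists():
        return {"case_id": None, "history": [], "completed_steps": []}
    return json.loads(path.read_text(encoding="utf-8"))


state_file = Path(tempfile.mkdtemp()) / "case_state.json"

# Session 1: the agent makes progress and checkpoints it.
state = load_state(state_file)
state["case_id"] = "CASE-001"  # made-up identifier
state["completed_steps"].append("verified_customer")
save_state(state_file, state)

# Session 2: a later session picks up where the first one stopped.
resumed = load_state(state_file)
print(resumed["completed_steps"])
```

The model never sees the file. The harness decides what to persist and what to load back into context on the next turn.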

Tool Execution. Rather than pre-building a rigid action for every scenario, give the agent the ability to compose and execute tools dynamically. In Agentforce, Tools and Actions define the agent’s action space. The harness determines how those are invoked, sequenced, and constrained.
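The split between "the action space" and "how it is invoked and constrained" can be sketched in Python as a tool registry plus a dispatcher. Everything here is hypothetical: the `tool` decorator, the `execute` function, the tool names, and the shape of a tool call are illustrative and are not Agentforce APIs.

```python
from typing import Any, Callable

TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_case(case_id: str) -> dict:
    return {"case_id": case_id, "status": "open"}  # stubbed data


@tool
def close_case(case_id: str) -> dict:
    return {"case_id": case_id, "status": "closed"}  # stubbed data


def execute(call: dict, allowed: set[str]) -> dict:
    """Run one model-requested tool call, subject to harness constraints."""
    name, args = call["name"], call.get("args", {})
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    if name not in allowed:
        return {"ok": False, "error": f"tool not permitted: {name}"}
    try:
        return {"ok": True, "result": TOOLS[name](**args)}
    except TypeError as exc:  # wrong or missing arguments from the model
        return {"ok": False, "error": str(exc)}


read_only = {"get_case"}
ok = execute({"name": "get_case", "args": {"case_id": "CASE-001"}}, read_only)
denied = execute({"name": "close_case", "args": {"case_id": "CASE-001"}}, read_only)
print(ok)
print(denied)
```

Errors come back as data rather than exceptions, so the harness can feed them to the model as feedback on the next step.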

Safe Execution and Guardrails. Agent-generated actions cannot run unchecked in enterprise systems. The Einstein Trust Layer in Agentforce is a concrete example of a harness-level safety primitive: masking PII, blocking unsafe outputs, enforcing data residency. All of it happens at the environment layer, not the model layer.
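As a rough illustration of a guardrail that lives outside the model, the Python sketch below masks two kinds of PII and gates risky actions behind human approval. It is not how the Einstein Trust Layer is implemented; the regex patterns, the `BLOCKED_ACTIONS` set, and the function names are assumptions made for this example.

```python
import re

# Deliberately simple patterns; production PII detection needs far more coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

BLOCKED_ACTIONS = {"delete_account", "issue_refund"}  # hypothetical action names


def mask_pii(text: str) -> str:
    """Replace emails and US SSN-shaped numbers before text reaches the model."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_SSN.sub("[SSN]", text)


def check_action(action: str, approved_by_human: bool = False) -> bool:
    """Actions on the blocked list require explicit human approval."""
    return action not in BLOCKED_ACTIONS or approved_by_human


masked = mask_pii("Contact jane.doe@example.com, SSN 123-45-6789.")
print(masked)
print(check_action("issue_refund"))
print(check_action("issue_refund", approved_by_human=True))
```

Both checks run whether or not the model behaves well, which is the point of placing them at the environment layer.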

Memory and Grounding. Models only know what is in their weights and context window. In enterprise agents, this means connecting to live CRM data, knowledge bases, and customer history at query time. Data Cloud grounding in Agentforce is this layer.
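Grounding at query time can be sketched in Python as "retrieve, then prepend". The keyword-overlap scoring below is a toy stand-in for real search or vector retrieval, and the `KNOWLEDGE` records are invented. Nothing here reflects how Data Cloud works internally.

```python
def tokenize(text: str) -> set[str]:
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(query: str, records: list[str], top_k: int = 2) -> list[str]:
    """Rank records by word overlap with the query (a stand-in for real search)."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(r)), r) for r in records]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [record for _, record in ranked[:top_k]]


KNOWLEDGE = [
    "Refund policy: refunds are issued within 30 days of purchase.",
    "Shipping: standard delivery takes 5 business days.",
    "Warranty: hardware is covered for one year.",
]

question = "How long do refunds take after purchase?"
facts = retrieve(question, KNOWLEDGE)
grounded_prompt = (
    "Answer using only these facts:\n" + "\n".join(facts) + f"\n\nQ: {question}"
)
print(grounded_prompt)
```

The retrieval runs on every query, so the answer reflects the current records rather than whatever the model memorized during training.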

Observability and the Feedback Loop. In production, the harness includes everything you use to understand what your agents are doing: traces, evals, session logs, and the feedback mechanisms that close the loop from agent behavior back to harness improvement. This is where most enterprise teams underinvest, and it is often the highest-leverage place to start.
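A small Python sketch of the tracing half of this layer: a decorator that records every step an agent takes. The `traced` decorator, the in-memory `TRACE` list, and the `classify_case` step are hypothetical; a real deployment would send these events to a logging or tracing backend.

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict] = []  # in a real system this would go to a log or trace store


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record name, arguments, outcome, and duration of every call."""
    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        event = {"step": fn.__name__, "args": args, "kwargs": kwargs}
        try:
            event["result"] = fn(*args, **kwargs)
            event["ok"] = True
            return event["result"]
        except Exception as exc:
            event["ok"] = False
            event["error"] = repr(exc)
            raise
        finally:
            event["seconds"] = time.perf_counter() - start
            TRACE.append(event)
    return wrapper


@traced
def classify_case(text: str) -> str:
    return "billing" if "invoice" in text.lower() else "general"


classify_case("Question about my invoice")
classify_case("Where is the login page?")

# A tiny eval over the trace: which labels did the agent assign?
labels = [event["result"] for event in TRACE if event["ok"]]
print(labels)
```

Once steps are recorded in a uniform shape, evals become queries over the trace, and their results point at which part of the harness to change next.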


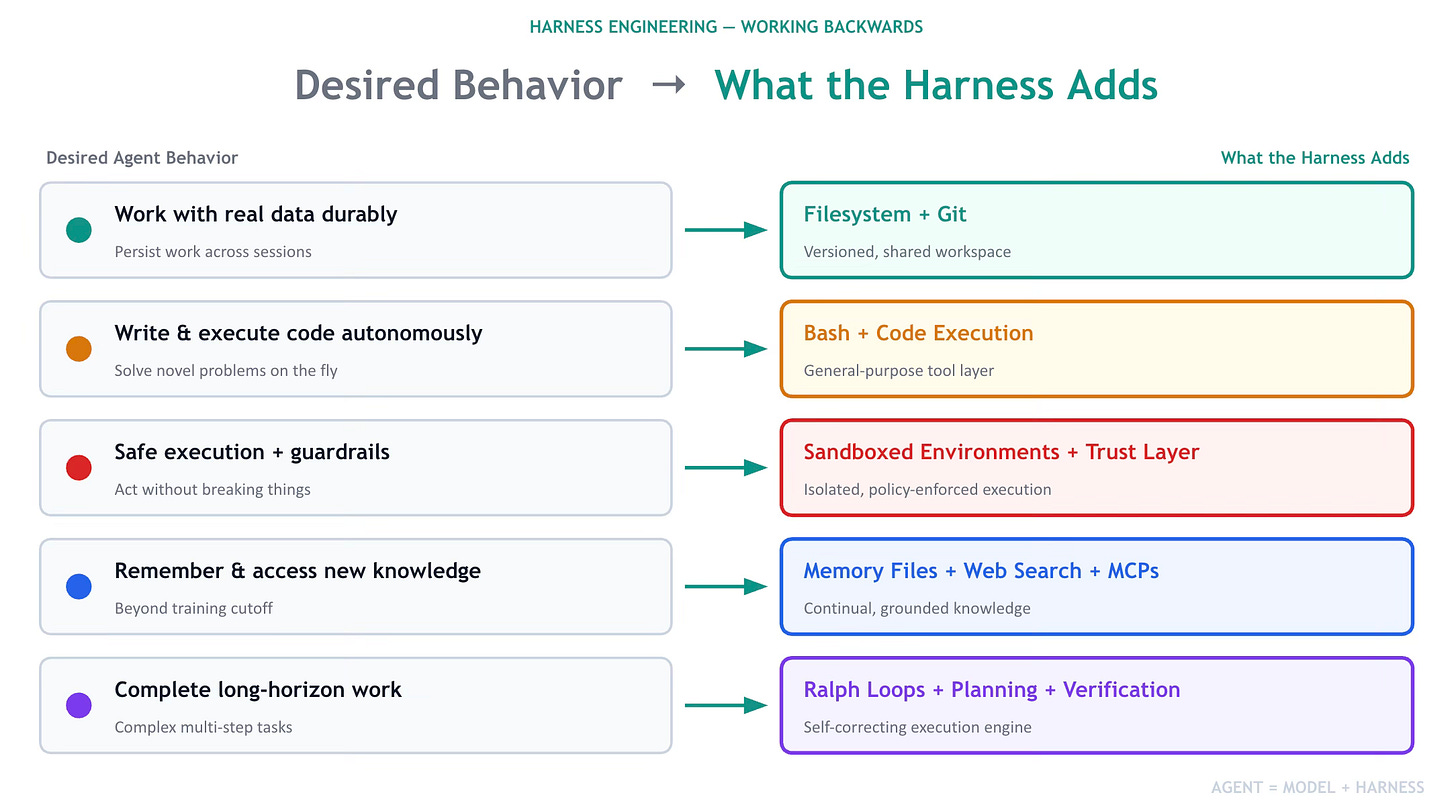

Working Backwards from Desired Agent Behavior to Harness Engineering

Harness engineering is about guiding AI agents to behave the way we want. It lets humans add structure, rules, and context so the model can perform useful tasks more reliably. As models have improved, harnesses have also come to be used to extend their abilities and work around their limitations.

Instead of listing every harness feature, the key idea is simple:

Start with the behavior you want from the agent, then design the harness to enable that behavior.
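One way to work backwards is to write the desired behavior down as a check before building anything else. The Python sketch below is an illustration only: the `Behavior` class, the action names, and the step limit are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Behavior:
    """A desired behavior stated as a checkable rule over the agent's actions."""
    name: str
    forbidden_actions: frozenset[str] = frozenset()
    max_steps: int = 10

    def violations(self, actions: list[str]) -> list[str]:
        problems = []
        if len(actions) > self.max_steps:
            problems.append(f"used {len(actions)} steps, limit is {self.max_steps}")
        for action in actions:
            if action in self.forbidden_actions:
                problems.append(f"forbidden action: {action}")
        return problems


resolve_without_escalating = Behavior(
    name="resolve simple cases without escalating",
    forbidden_actions=frozenset({"escalate_case"}),
    max_steps=4,
)

good_run = ["get_case", "send_reply", "close_case"]
bad_run = ["get_case", "escalate_case"]
print(resolve_without_escalating.violations(good_run))
print(resolve_without_escalating.violations(bad_run))
```

Each violation then suggests a harness change: a forbidden action points to a tool permission, and an exceeded step limit points to a budget in the agent loop.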

References:

1. https://blog.langchain.com/anatomy-of-an-agent-harness
2. https://madplay.github.io/en/post/harness-engineering